Used NVIDIA V100 server GPU becomes low-cost local LLM accelerator

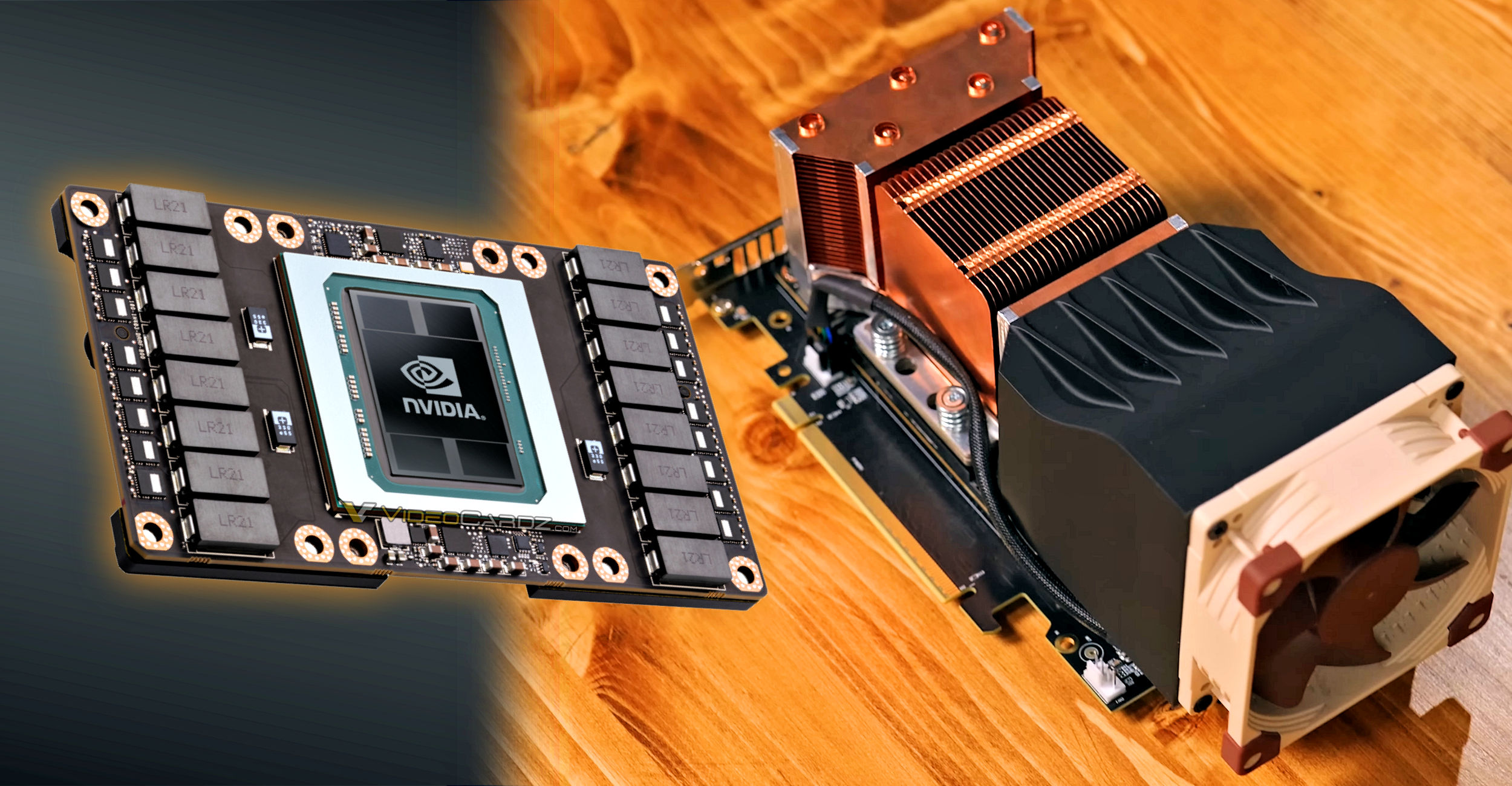

Hardware Haven has tested one of the stranger GPU setups we have seen recently. The creator bought an NVIDIA Tesla V100 in the SXM2 form factor and mounted it on a PCIe adapter card, turning a server accelerator into something that can run in a normal desktop system.

To be honest, I had no idea that SXM2-to-PCIe adapters like this existed, or that they would just work. The V100 is not a gaming card and has no display outputs. It belongs to NVIDIA's Volta generation and was designed for data centers, AI, HPC and other compute workloads. NVIDIA sold the V100 in PCIe and SXM2 versions, with 16GB or 32GB of HBM2 memory depending on configuration. The SXM2 version uses a socket-style server interface rather than the standard PCIe card format.

Source: Hardware Haven

The setup cost around $200 for the 16GB V100 and the SXM2-to-PCIe adapter. Hardware Haven also added an 80mm Noctua fan and a 3D-printed shroud because the card relies on server airflow by default. With tax and cooling parts included, the full setup came to around $235.

In local LLM testing with Ollama, the V100 produced around 130 tokens/s with gpt-oss-20b. A Radeon RX 7800 XT 16GB system reached around 90 tokens/s in the same test. In another test using Gemma 4 E4B, the V100 reached 108 tokens/s, while an RTX 3060 12GB reached 76 tokens/s. The V100 system drew more power at default settings, but it still delivered slightly better tokens per watt than the RTX 3060 system.
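
For readers who want to collect comparable numbers on their own hardware, here is a minimal Python sketch of one way to read generation speed from a local Ollama server. It is not Hardware Haven's test method, which the video summary does not detail. It assumes Ollama is listening on its default local port and relies on the `eval_count` and `eval_duration` fields that Ollama's generate endpoint returns. The helper names `run_prompt` and `tokens_per_second` are made up for this example, and the model tag in the usage note is an assumption.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def tokens_per_second(response: dict) -> float:
    """Return generation throughput from an Ollama generate response.

    eval_count is the number of generated tokens and eval_duration is
    the time spent generating them, reported in nanoseconds.
    """
    return response["eval_count"] / (response["eval_duration"] / 1e9)


def run_prompt(model: str, prompt: str) -> dict:
    """Send one non-streaming prompt and return the parsed JSON reply."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as reply:
        return json.loads(reply.read())
```

With a model already pulled, something like `tokens_per_second(run_prompt("gpt-oss:20b", "Explain HBM2 in two sentences."))` would give a figure in tokens/s. Results vary with prompt length, quantization and driver version, so a single run is only a rough guide.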

The more interesting result came after power limiting. At a 100W GPU power limit, the V100 system produced 95 tokens/s while drawing 170W from the wall. The RTX 3060 system, also limited to 100W, produced 68 tokens/s at 171W. This gave the older Volta accelerator a clear efficiency lead in this specific test. Idle power was still worse, with the V100 system drawing around 45W compared to 35W for the RTX 3060 system.
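
To put a number on that efficiency lead, the short Python sketch below divides the reported throughput by the reported wall power for the two power-limited systems. The figures come straight from the test above; the function name `tokens_per_watt` is just a label for this example. Since a watt is one joule per second, tokens/s per watt is the same as tokens per joule of wall energy.

```python
def tokens_per_watt(tokens_per_s: float, wall_watts: float) -> float:
    """Throughput divided by whole-system wall power."""
    return tokens_per_s / wall_watts


# Reported results at a 100W GPU power limit.
v100 = tokens_per_watt(95, 170)      # V100 system: 95 tokens/s at 170W
rtx3060 = tokens_per_watt(68, 171)   # RTX 3060 system: 68 tokens/s at 171W

# Relative advantage of the V100 system over the RTX 3060 system.
advantage = v100 / rtx3060 - 1

print(f"V100:     {v100:.2f} tokens/s per watt")
print(f"RTX 3060: {rtx3060:.2f} tokens/s per watt")
print(f"V100 advantage: {advantage:.0%}")
```

That works out to roughly 0.56 versus 0.40 tokens/s per watt, or about 40% more output per watt for the V100 system in this one test. Because both numbers are measured at the wall, they include the rest of each system, not just the GPU.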

The V100 SXM2 setup needs an adapter, active cooling, integrated graphics or another display output, and some tolerance for unsupported enterprise hardware. It is not a plug-and-play gaming GPU. Still, for local AI experiments, this shows that old server accelerators can still offer good value if the price is low enough.

Source via Tom’s Hardware:

Hardware Haven: This Ridiculous $200 AI GPU Shouldn’t Be This Good